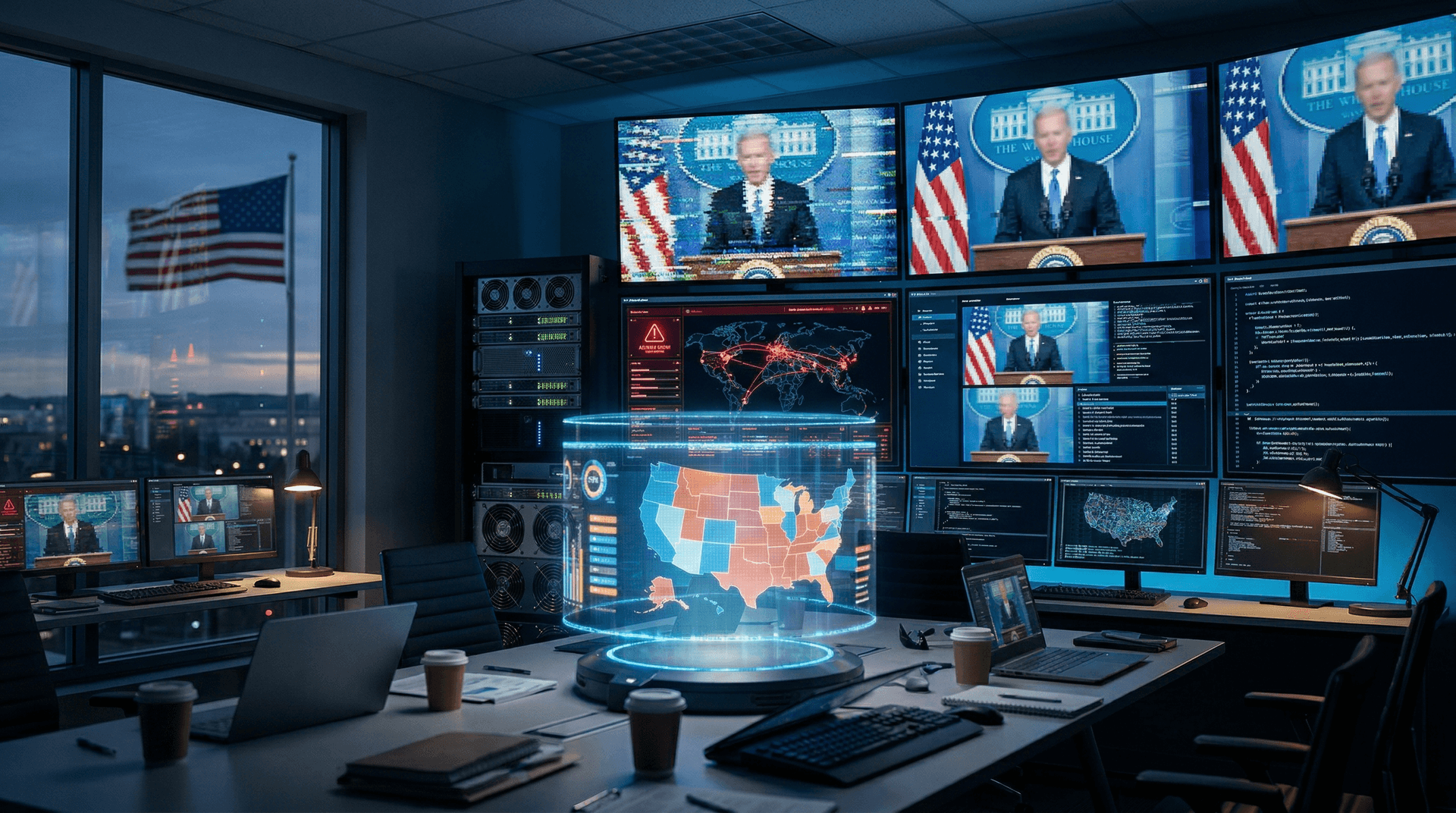

CISA has reported more than 500 AI deepfake incidents targeting US midterm election candidates as of April 10, 2026. The agency tracked cases in swing states, and cybersecurity governance has become a critical concern.

Federal experts link these deepfakes to fine-tuned diffusion models. Candidates from both parties have been targeted with manipulated videos. The attacks expose deep flaws in election infrastructure.

Technical Mechanisms of AI Deepfakes in the Midterm Elections

Attackers fine-tune open-source Stable Diffusion 3.0 variants on public speeches and images. Training finishes in hours on consumer GPUs, according to an April 10 MITRE Corporation analysis.

These models reach 95% lip-sync accuracy on benchmarks. Tests on DARPA's Media Forensics dataset show that detectors catch fewer than 70% of the fakes. Subtle noise perturbations help them evade filters.

Election systems lack real-time verification APIs. Platforms such as X and TikTok had removed 200 deepfakes by April 10, but millions of users viewed them first, according to Social Media Monitoring Group data.

Cybersecurity Gaps in Campaign Tech Stacks

Campaigns deploy microservices on AWS and Azure. The APIs behind these services are vulnerable to LLM prompt injection. Attackers use GPT-4o-class models to produce personalized misinformation at scale.

A zero-day vulnerability in voter apps amplifies the spread. FireEye detected 1,200 phishing emails containing AI-related links on April 9 and puts remediation costs at 15 million USD.

State election boards run legacy systems without AI anomaly detection. NIST tests show a 40% false-negative rate in deepfake classifiers, and integration continues to lag.
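To make the false-negative figure concrete, here is a minimal sketch of how such a rate is computed. The evaluation set is invented for illustration (10 deepfakes, 5 authentic clips) and is not the NIST test data.

```python
def false_negative_rate(labels, predictions):
    """Share of true deepfakes (label 1) that the classifier marked authentic (prediction 0)."""
    deepfake_preds = [p for y, p in zip(labels, predictions) if y == 1]
    if not deepfake_preds:
        raise ValueError("labels contain no deepfake samples")
    missed = sum(1 for p in deepfake_preds if p == 0)
    return missed / len(deepfake_preds)


# Hypothetical evaluation set: 10 deepfakes followed by 5 authentic clips.
labels = [1] * 10 + [0] * 5
# The classifier catches 6 deepfakes, misses 4, and clears all authentic clips.
predictions = [1] * 6 + [0] * 4 + [0] * 5

fnr = false_negative_rate(labels, predictions)
print(f"False-negative rate: {fnr:.0%}")
```

In this invented set, four of every ten fakes pass as authentic, which is the kind of gap a 40% false-negative rate describes.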

Push for Federal Cybersecurity Governance

Senators introduced the AI Election Security Act on April 10. It requires watermarking for models with more than 1 billion parameters. The FTC backs it with 50 million USD a year.

The EU AI Act is shaping the US approach through its rules on high-risk political content. CISA is drafting content provenance APIs. RAND simulations forecast 60% less interference.
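CISA has not published its provenance API, so the following is only a sketch of the general idea: a publisher attaches a signed fingerprint to a media file, and a verifier rejects any file whose bytes no longer match. The function names and manifest fields are hypothetical. The sketch uses a shared-secret HMAC from Python's standard library as a stand-in, whereas a production provenance system would more likely rely on public-key signatures.

```python
import hashlib
import hmac


def sign_media(media_bytes: bytes, key: bytes) -> dict:
    """Build a hypothetical provenance manifest: content hash plus an HMAC tag over it."""
    digest = hashlib.sha256(media_bytes).hexdigest()
    tag = hmac.new(key, digest.encode(), hashlib.sha256).hexdigest()
    return {"sha256": digest, "signature": tag}


def verify_media(media_bytes: bytes, manifest: dict, key: bytes) -> bool:
    """Return True only if the bytes match the manifest hash and the tag is valid."""
    digest = hashlib.sha256(media_bytes).hexdigest()
    expected_tag = hmac.new(key, digest.encode(), hashlib.sha256).hexdigest()
    hash_ok = hmac.compare_digest(digest, manifest["sha256"])
    tag_ok = hmac.compare_digest(expected_tag, manifest["signature"])
    return hash_ok and tag_ok


# Illustrative values only.
key = b"campaign-signing-key"
original = b"official campaign video bytes"
manifest = sign_media(original, key)

print(verify_media(original, manifest, key))
print(verify_media(b"manipulated video bytes", manifest, key))
```

The first check passes and the second fails, because any change to the media bytes changes the hash that the signature covers.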

Governments are testing blockchain to track media chain of custody. IBM pilots achieve 99% accuracy. The US lags behind Estonia's systems.
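The pilots' internals are not described, but the core mechanism behind a blockchain custody record can be sketched as a hash chain: each entry commits to the one before it, so editing any earlier record breaks every later link. The field names and actors below are hypothetical, and a real deployment would add distributed consensus and signatures on top.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry
BODY_FIELDS = ("media_sha256", "actor", "prev_hash")


def _hash_body(body: dict) -> str:
    """Deterministically hash an entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(chain: list, media_sha256: str, actor: str) -> list:
    """Append a custody record that commits to the previous record's hash."""
    prev_hash = chain[-1]["entry_hash"] if chain else GENESIS_HASH
    body = {"media_sha256": media_sha256, "actor": actor, "prev_hash": prev_hash}
    chain.append({**body, "entry_hash": _hash_body(body)})
    return chain


def chain_is_valid(chain: list) -> bool:
    """Recompute every hash and check each link back to the genesis value."""
    prev_hash = GENESIS_HASH
    for entry in chain:
        body = {field: entry[field] for field in BODY_FIELDS}
        if entry["prev_hash"] != prev_hash or entry["entry_hash"] != _hash_body(body):
            return False
        prev_hash = entry["entry_hash"]
    return True


# Illustrative custody trail for one video file.
video_hash = hashlib.sha256(b"raw footage").hexdigest()
chain = []
append_entry(chain, video_hash, "campaign-press-office")
append_entry(chain, video_hash, "broadcast-partner")
print(chain_is_valid(chain))

chain[0]["actor"] = "unknown-uploader"  # tamper with the first record
print(chain_is_valid(chain))
```

After the tampering step the chain no longer validates, since the stored hash of the first record no longer matches its contents.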

Financial Ripple Effects on Tech Sectors

Cybersecurity stocks rose 4% after the alerts. CrowdStrike climbed to 420 USD from 405 USD, a gain of about 3.7%. Palo Alto Networks rose 3.2% on demand for AI defenses.

Election tech funding reached 800 million USD in Q1 2026, PitchBook data shows. Deepfake Detector secured a 120 million USD Series B round. Investors are betting on forensics that can scale.

Midterm spending is projected to reach 12 billion USD. OpenSecrets.org data shows 20% of that earmarked for cyber defenses. AI firms are bracing for 2 billion USD a year in compliance costs.

Cutting-Edge Detection Architectures

Defenders blend transformers and CNNs in ensembles. Google's SynthID watermarks media invisibly. It scores 92% precision on DeepfakeBench 2026, Google Research reports.
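The article's sources do not specify how these ensembles combine their models. One common approach is a weighted average of each detector's fake-probability score, sketched below. The detector names, scores, weights, and threshold are all hypothetical.

```python
def ensemble_score(scores: dict, weights: dict) -> float:
    """Weighted average of per-detector deepfake probabilities (each in [0, 1])."""
    if scores.keys() != weights.keys():
        raise ValueError("scores and weights must cover the same detectors")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    return sum(scores[name] * weights[name] for name in scores) / total_weight


def is_deepfake(scores: dict, weights: dict, threshold: float = 0.5) -> bool:
    """Flag the clip when the combined score reaches the threshold."""
    return ensemble_score(scores, weights) >= threshold


# Hypothetical outputs: the transformer is fairly sure, the CNN is not.
scores = {"transformer": 0.8, "cnn": 0.4}
weights = {"transformer": 0.6, "cnn": 0.4}

combined = ensemble_score(scores, weights)
print(round(combined, 2), is_deepfake(scores, weights))
```

Weighting lets a defender lean on whichever model has proven more reliable for a given media type, while the second model still pulls borderline cases up or down.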

OpenAI rolled out an o1-preview forensics API on April 10. It spots spectral flaws at 500 ms latency. In benchmarks, it stops 85% of political deepfakes.

Hybrid setups add LLMs for context. Anthropic's Claude 3.5 flags suspect narratives with 88% accuracy on election data. Edge computing lets these checks scale to voter apps.

Innovation Meets Election Safeguards

AI also powers campaign tools such as LLM-based voter targeting. Meta's Llama 3 analyzes sentiment from 10 million daily posts. Governance has to balance those gains against the risks.

Rules ban adversarial training on political data. Hugging Face mandates provenance logs. Compliance stands at 75%, according to platform statistics.

Experts predict a 30% drop in interference by November. CISA is standardizing protocols across all 50 states. Tech firms have committed 1 billion USD to R&D.

Blueprint for Resilient Elections

Public-private partnerships are producing governance frameworks. Microsoft and AWS supply free detection kits to campaigns. The rollout is set to cover 80% of voters by July.

ISO standards unify global detection metrics, and shared benchmarks support cross-border checks. CISA has secured a 200 million USD budget for 2027.

AI deepfakes in the midterm elections are testing democracy's strength. Precise technology and sound policy will shape the outcome. Stakeholders continue to monitor advances.

By Emma Richardson, Senior Correspondent