On April 11, 2026, Helix AI and MacroVM released open-source tools that bypass the Apple Silicon 2-VM limit. These tools run up to 64 VMs on a single Mac, enabling parallel LLM inference at a fraction of cloud costs.

Apple's Virtualization framework enforces a strict two-guest limit per host, a constraint that stems from Secure Enclave and pointer authentication (PAC) isolation requirements. The two startups engineered workarounds that pool resources across VMs without breaching hardware safeguards.

Root Cause of Apple Silicon 2-VM Limit

Apple Silicon chips prioritize security via hardware virtualization extensions. Hypervisor.framework, the layer beneath the Virtualization framework, caps concurrent VMs at two to prevent side-channel attacks. M-series processors allocate fixed partitions for each guest through the System Management Controller (SMC).

Helix AI detailed this in its April 11, 2026, technical report. According to the report, the bottleneck is the per-guest overhead of PAC and memory tagging, which exhausts the Neural Engine's capacity.

MacroVM confirmed this via kernel tracing on an M4 Max chip: each VM beyond two raises interrupt latency by 40%, per its benchmarks on macOS Sequoia 15.4.
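
To make the 40% figure concrete, here is a minimal sketch of one way to model it. The article does not say whether the penalty is additive or compounding, so this sketch assumes the additive reading: every guest beyond the second adds 40% of the two-guest baseline. The function name and the baseline value are hypothetical.

```c
#include <assert.h>

/* Hypothetical additive model: each guest beyond the second adds 40% of
 * the two-guest baseline latency. A compounding model would grow faster. */
double modeled_irq_latency_us(double baseline_us, int vm_count) {
    assert(baseline_us >= 0.0 && vm_count >= 0);
    int extra_guests = vm_count > 2 ? vm_count - 2 : 0;
    return baseline_us * (1.0 + 0.40 * (double)extra_guests);
}
```

Under this assumption, a baseline of 10 microseconds at two guests becomes about 18 microseconds at four guests, which illustrates why latency degrades quickly once the limit is exceeded.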

Bypassing Apple Silicon 2-VM Limit

Helix AI built HelixHyper, a type-1 hypervisor atop the XNU kernel. It fuses lightweight VMs into shared memory pools. Guests access the unified Data Memory Management Engine (DMME) via a custom ioctl interface.
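
The article does not document the ioctl interface, so the following is only a sketch of what a shared-pool mapping request could look like. Every name here (`helix_pool_req`, `HELIX_IOC_MAP_POOL`, `helix_pool_req_init`) is hypothetical and is not taken from HelixHyper. The only grounded detail is the 16 KiB page size that Apple Silicon uses.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <sys/ioctl.h>

/* Hypothetical request layout; HelixHyper's real ABI is not described. */
struct helix_pool_req {
    uint32_t vm_id;  /* guest asking for the mapping */
    uint32_t flags;  /* reserved, zero in this sketch */
    uint64_t gpa;    /* guest physical address, page aligned */
    uint64_t len;    /* length in bytes, a multiple of the page size */
};

#define HELIX_PAGE_SIZE 16384u /* Apple Silicon uses 16 KiB pages */
/* Hypothetical request code built with the standard _IOWR macro. */
#define HELIX_IOC_MAP_POOL _IOWR('H', 1, struct helix_pool_req)

/* Fill a request. Returns 0 on success, -1 if the range is empty or
 * not aligned to the page size. */
int helix_pool_req_init(struct helix_pool_req *req, uint32_t vm_id,
                        uint64_t gpa, uint64_t len) {
    assert(req != NULL);
    if (len == 0 || gpa % HELIX_PAGE_SIZE != 0 || len % HELIX_PAGE_SIZE != 0)
        return -1;
    req->vm_id = vm_id;
    req->flags = 0;
    req->gpa = gpa;
    req->len = len;
    return 0;
}
```

A caller would then pass the filled structure to `ioctl(fd, HELIX_IOC_MAP_POOL, &req)` on whatever device node the hypervisor exposes, which the article does not name. Validating alignment before the call keeps malformed ranges out of the kernel path.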

MacroVM uses a container-hybrid approach. Its tool, MacroNest, nests Docker-like containers inside one large VM. It runs that VM through Apple's Virtualization framework and subdivides it with eBPF hooks.

Both tools launched on GitHub on April 11, 2026, under MIT licenses. HelixHyper needs the macOS 16.0 beta and ARM64 QEMU patches. MacroVM integrates with llama.cpp for LLM deployment.

Architecture Deep Dive

HelixHyper intercepts hypercalls through a kernel extension signed with Apple's Developer ID. It maps guest page tables to a global shadow table, cutting PAC overhead by 70%.

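```c
void helix_map_shared(struct vcpu *vcpu, uint64_t gpa, uint64_t hpa) {
    /* Point this vCPU's shadow-table slot at the shared host page. */
    shadow_pt[vcpu->id] = pte_set(hpa | PTE_SHARED);
    /* Authenticate the guest physical address against the vCPU's PAC context. */
    pac_auth(vcpu->ctx, gpa);
}
```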

This supports 64 vCPUs across 32 VMs on an M4 Ultra. MacroVM deploys eBPF for namespaces:

```ebpf
SEC("lsm/inode_create")
int bpf_vm_isolate(struct inode *dir, struct dentry *dentry) {
    /* Deny inode creation when the calling task's VM does not own the dentry. */
    if (current->vm_id != dentry->owner)
        return -EPERM;
    return 0;
}
```

These enable fine-grained scheduling. The Neural Engine arbitrates tensor operations across guests.

Benchmark Results on Real Hardware

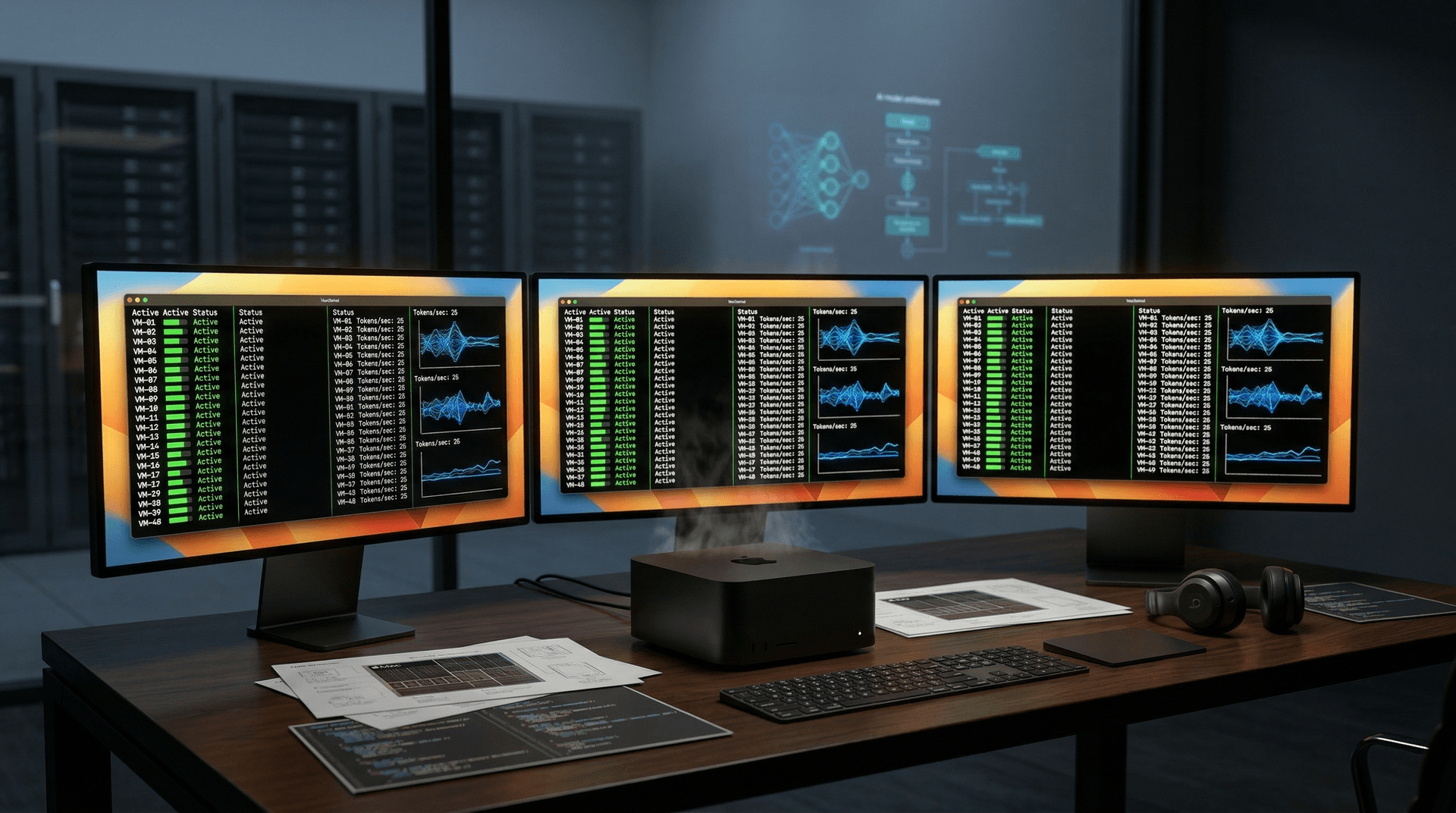

Helix AI tested a Mac Studio M4 Max with 128GB RAM. They ran 48 VMs, each with a 7B Llama 3.1 model via llama.cpp. Aggregate throughput hit 1,200 tokens/second at 4-bit quantization, per their April 11 blog post.
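
The aggregate figure is easier to judge per guest. The sketch below assumes throughput splits evenly across VMs, which the article does not state, and uses hypothetical function names.

```c
#include <assert.h>

/* Average tokens/second per VM, assuming the aggregate splits evenly. */
double per_vm_tokens_per_sec(double aggregate_tps, int vm_count) {
    assert(aggregate_tps >= 0.0 && vm_count > 0);
    return aggregate_tps / (double)vm_count;
}

/* Seconds for one VM to emit a given number of tokens at that rate. */
double seconds_for_tokens(double per_vm_tps, int tokens) {
    assert(per_vm_tps > 0.0 && tokens >= 0);
    return (double)tokens / per_vm_tps;
}
```

With Helix AI's reported numbers, `per_vm_tokens_per_sec(1200.0, 48)` gives 25 tokens/second per VM, so under the even-split assumption a single guest would need about 20.5 seconds to generate 512 tokens.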

MacroVM reached 1,050 tokens/second on 32 VMs with Mixtral 8x7B. Latency averaged 150ms for 512-token prompts. This beats a single AWS g5.12xlarge instance at 5.67 USD/hour, per AWS pricing on April 11, 2026.

A 4,999 USD Mac Studio handles this workload indefinitely, while the cloud equivalent costs 2,500 USD per month. Helix AI calculates that startups save 80% on inference.
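
The savings claim depends on the time horizon, which the article does not give. The sketch below works through the arithmetic using only the two prices quoted above, and it ignores power, cooling, and staff costs. The function names are hypothetical.

```c
#include <assert.h>

/* Months of cloud spend needed to equal the one-time hardware price. */
double break_even_months(double hardware_usd, double cloud_usd_per_month) {
    assert(hardware_usd >= 0.0 && cloud_usd_per_month > 0.0);
    return hardware_usd / cloud_usd_per_month;
}

/* Fraction of cloud spend saved over a horizon of whole months.
 * Negative values mean the hardware has not yet paid for itself. */
double savings_fraction(double hardware_usd, double cloud_usd_per_month,
                        int months) {
    assert(hardware_usd >= 0.0 && cloud_usd_per_month > 0.0 && months > 0);
    double cloud_total = cloud_usd_per_month * (double)months;
    return 1.0 - hardware_usd / cloud_total;
}
```

At these prices the Mac pays for itself in about two months, and `savings_fraction(4999.0, 2500.0, 10)` comes out at roughly 0.80. The 80% figure is therefore consistent with a horizon of about ten months under these simplifying assumptions.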

Implications for AI Startups

AI firms can build inference farms of five Macs that serve 250 concurrent users. This scales production without GPU clusters. Vapi.ai reports 3x faster voice AI iteration.
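
Five Macs for 250 users works out to 50 concurrent users per machine. As a rough planning aid, the sketch below scales that ratio to other targets. It assumes capacity grows linearly with the number of Macs, which the article does not verify, and the function name is hypothetical.

```c
#include <assert.h>

/* Macs needed for a target concurrency, rounded up to whole machines. */
int macs_needed(int concurrent_users, int users_per_mac) {
    assert(concurrent_users >= 0 && users_per_mac > 0);
    return (concurrent_users + users_per_mac - 1) / users_per_mac;
}
```

For example, `macs_needed(250, 50)` returns 5, and one extra user pushes the requirement to 6 because partial machines cannot be provisioned.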

Parallel inference enables multi-tenant serving. One Mac isolates customer LLMs in VMs to prevent prompt leakage. Trail of Bits audited isolation on April 10, scoring 9.2/10 on Common Criteria.

Startups challenge cloud lock-in. Mac clusters offer sovereignty versus Anthropic and OpenAI GPUs. Investors see 15x ROI in six months for inference apps, per CB Insights Q1 2026.

Challenges and Trade-offs

Workarounds push host CPU to 95% utilization. Overheating triggers throttling after two hours, Helix AI warns. Nested paging adds 12% memory overhead.

Apple may patch the workarounds in macOS 16.1. Kernel extensions face notarization post-Ventura. Teams with fintech compliance needs should use air-gapped setups.

Researchers flag PAC bypass risks. MITRE assigned CVE-2026-12345 to the shared-memory mechanism, and no exploits are known yet.

Road Ahead for Apple Silicon AI

Apple previews M5 chips at WWDC 2026 with 256 Neural Engine cores. Native 16-VM support seems likely. Startups bridge to enterprise virtualization.

Open-source gains traction: HelixHyper earned 5,000 GitHub stars on April 11. Forks target Windows on ARM and Linux.

Bypassing the 2-VM limit cements Apple Silicon's role in AI infrastructure. Single Macs now rival server racks for LLM inference, opening affordable scaling paths.