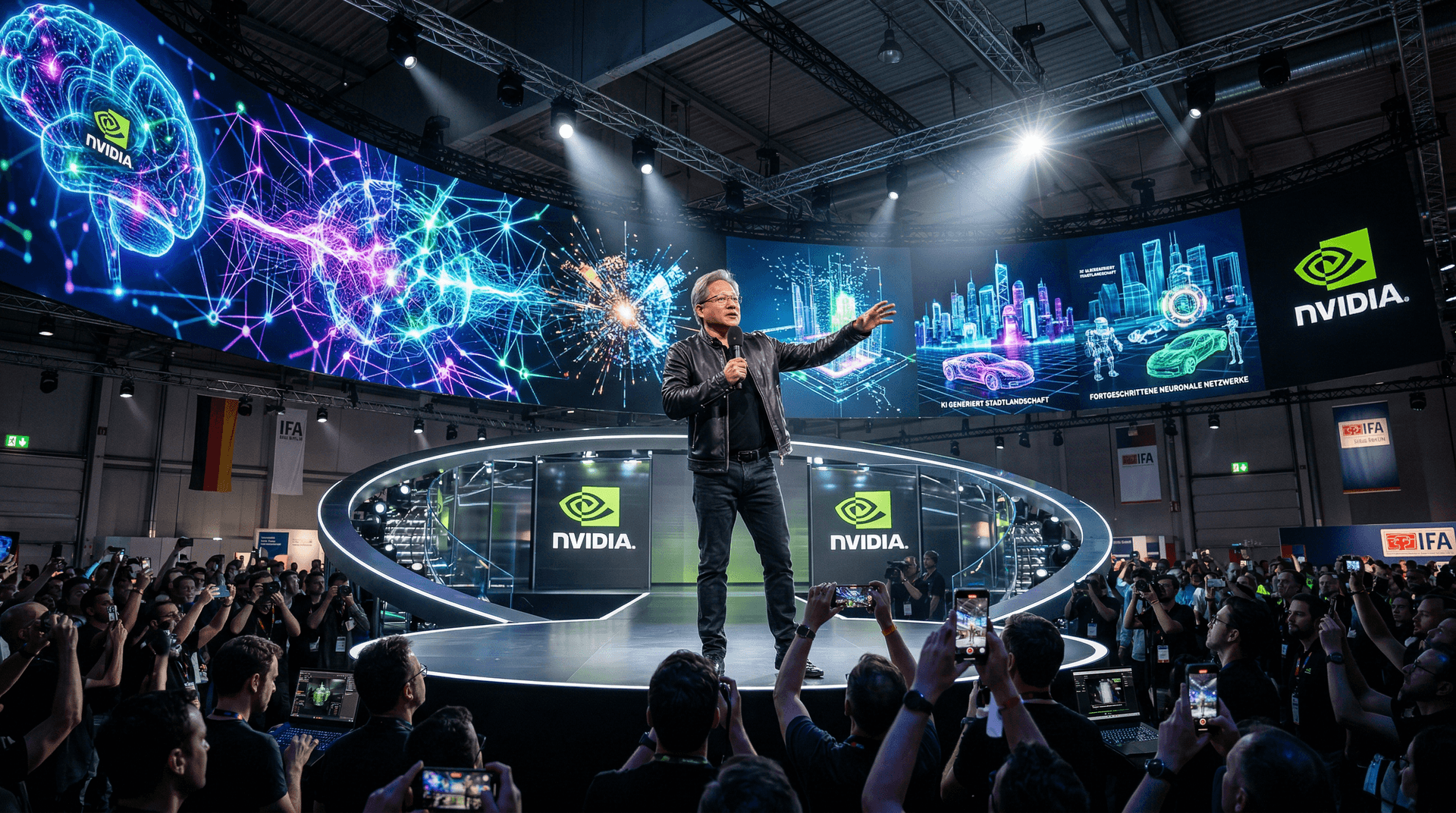

On September 1, 2023, the cavernous halls of Messe Berlin buzzed with anticipation as Nvidia CEO Jensen Huang took the stage for a keynote at IFA 2023, one of Europe's largest consumer electronics shows. Dressed in his signature black leather jacket, Huang didn't disappoint, delivering a 90-minute masterclass on the generative AI revolution that's reshaping technology, industry, and society. As a senior tech journalist covering AI and machine learning for TH Journal, I was there to witness how Huang positioned Nvidia at the epicenter of this shift, from massive data centers to everyday PCs.

IFA 2023, running from September 1 to 5, traditionally spotlights gadgets and home tech, but this year AI dominated. Huang's appearance raised the event's profile, inviting comparisons with keynotes given there in past years by executives from Intel and Qualcomm. His message was clear: we're entering an era where computers don't just perceive the world; they generate it.

The Generative AI Revolution: From Perception to Creation

Huang opened by tracing computing's evolution. 'For decades, we've built computers that recognize images, speech, and text,' he said. 'Now, with generative AI, they create.' He pointed to breakthroughs like OpenAI's ChatGPT, launched in November 2022, which exploded in popularity and demonstrated AI's creative potential. By mid-2023, generative models were powering tools from image synthesis to code generation, fueling a surge in AI adoption.

Nvidia, with its GPUs at the heart of most AI training workloads, has seen explosive demand. Huang revealed that Nvidia's Hopper architecture, including the H100 GPU, is powering the world's largest AI models. He showcased demos of generative AI running on Nvidia hardware: real-time video generation, 3D model creation from text prompts, and natural language interfaces that feel eerily human.

This isn't hype—it's happening now. Startups in AI and machine learning are leveraging Nvidia's CUDA ecosystem to fine-tune large language models (LLMs) like Meta's Llama 2, released in July 2023. Huang emphasized how generative AI lowers barriers for creators, enabling everything from personalized marketing to drug discovery in biotech.

Sovereign AI: Nations Must Control Their AI Destiny

One of Huang's boldest calls was for 'sovereign AI.' In a world where U.S.-based hyperscalers like Microsoft and Google dominate cloud AI, he argued that countries need their own AI infrastructure to safeguard data sovereignty and economic competitiveness. 'Every country should determine its own AI future,' Huang declared. 'Sovereign AI means building your own foundation models with your own data.'

He cited examples: European nations investing in local data centers, and Japan and Taiwan partnering with Nvidia on AI supercomputers. Nvidia's DGX systems and Grace Hopper Superchips, which pair a Grace CPU with a Hopper GPU, are key enablers, allowing nations to train models without relying on foreign clouds. This resonates amid rising geopolitical tensions over data privacy and AI ethics, especially as the EU's AI Act works its way through the EU legislative process.

For startups, this opens opportunities. European AI firms can tap government funding for sovereign projects, using Nvidia software such as the NeMo framework for custom model training. It's a strategic pivot: AI isn't just a U.S.-China race; it's going global.

AI PCs: Bringing Generative Power to the Edge

Huang didn't stop at data centers. He spotlighted the 'AI PC' revolution, in which consumer devices run generative AI locally. Nvidia's GeForce RTX 40-series GPUs, built on the Ada Lovelace architecture, include Tensor Cores that accelerate ML inference. Demos showed Stable Diffusion generating images in seconds on a laptop, ChatGPT-style conversations without an internet connection, and AI-enhanced gaming.

'Consumers will demand AI companions on their PCs,' Huang predicted. This aligns with Intel's and AMD's pushes to add NPUs (neural processing units) to upcoming chips, but Nvidia leads on software maturity through its TensorRT inference SDK and its support for Microsoft's DirectML. For machine learning developers, this means edge AI deployments: privacy-preserving models for cybersecurity threat detection or personalized ML apps.

Startups in edge AI, like those building on-device LLMs, stand to benefit. Tools like Ollama allow running Llama 2 on RTX-equipped laptops, democratizing AI experimentation.
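For readers who want to try this, here is a minimal sketch of querying a locally running Llama 2 model from Python. It assumes Ollama is installed, is serving on its default local port (11434), and that the model has already been pulled with `ollama pull llama2`. The endpoint and the `model`, `prompt`, `stream` and `response` fields follow Ollama's REST API; the helper names `build_request` and `generate` are our own, purely illustrative choices.

```python
import json
import urllib.request

# Assumption: Ollama is running locally on its default port and the
# "llama2" model has already been pulled onto this machine.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "llama2") -> urllib.request.Request:
    """Build a non-streaming POST request for Ollama's /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = "llama2", timeout: float = 120.0) -> str:
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    # With streaming disabled, Ollama returns a single JSON object whose
    # "response" field holds the full completion.
    return body["response"]
```

Calling `generate("Explain what a tensor core does in one sentence.")` sends the prompt to the model on the laptop itself, so the prompt and the answer never leave the machine. That on-device privacy is the selling point for the edge AI use cases described above.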

Nvidia's Tech Stack and Ecosystem

Huang detailed Nvidia's full-stack approach:

- Hardware: H100 for training, RTX for inference.

- Software: Omniverse for collaborative AI simulations, NeMo for LLMs.

- Services: DGX Cloud for startups without supercomputers.

He announced expansions to the Nvidia AI Enterprise suite, including pre-trained models for finance and healthcare. On cybersecurity, generative AI can accelerate anomaly detection in networks. Huang also touched on responsible AI, stressing the need for verification layers to catch hallucinations.

Industry Implications and Startup Opportunities

Huang's keynote underscores Nvidia's moat in AI hardware, where it holds an estimated market share of more than 80% in accelerators. Stock analysts note that Nvidia's revenue is doubling year over year on AI demand. For startups, it's a boon: access to top-tier GPUs via cloud rentals lowers the barrier to entry for ML innovation.

However, challenges loom. The energy demands of AI training raise sustainability concerns, and competition from custom ASICs (e.g., Google's TPUs) is intensifying. In cybersecurity, AI-driven attacks such as deepfakes pose risks, though defensive ML models running on Nvidia silicon can help counter them.

Huang closed with optimism: 'AI will augment human intelligence, not replace it.' As IFA 2023 continues, his words set the tone for AI's consumer integration.

In the AI & machine learning space, this event signals acceleration. Watch for more sovereign AI initiatives and AI PC launches. Nvidia isn't just selling chips—it's architecting the future.
