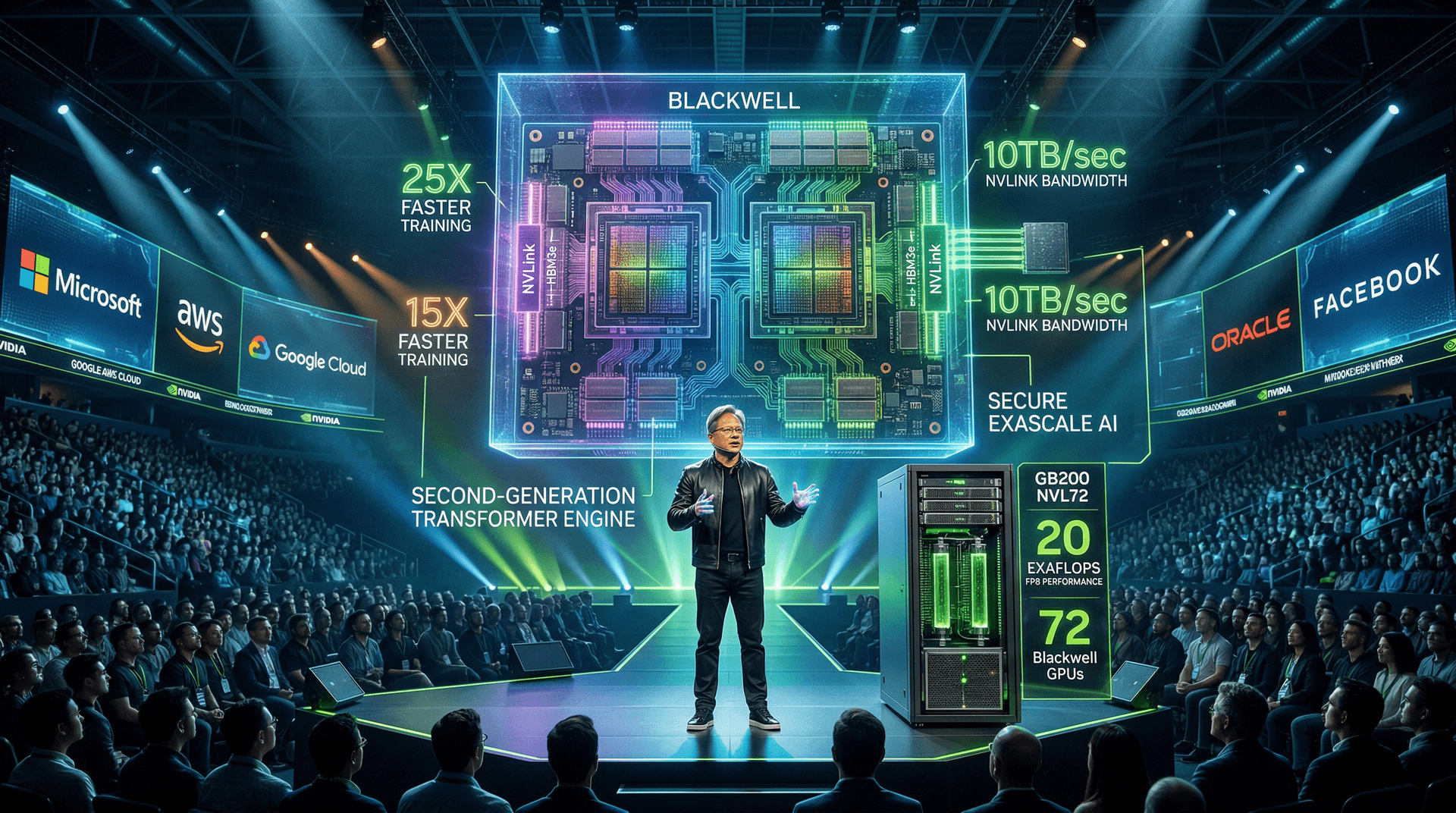

On March 18, 2024, Nvidia CEO Jensen Huang took the stage at the SAP Center in San Jose to open the company's flagship GPU Technology Conference (GTC). In a packed keynote that ran roughly two hours, Huang laid out a vision for the next phase of AI development, centered on the newly unveiled Blackwell architecture. The conference, which ran through March 21, was more than a product launch: it was a statement of Nvidia's lead in the AI hardware market, with significant business implications for enterprises racing to deploy generative AI at scale.

Blackwell: Engineering Marvel for Trillion-Parameter Models

The star of the show was Blackwell, Nvidia's latest GPU platform and the successor to the Hopper architecture. The architecture is named after the mathematician David Blackwell and debuts in the B100 and B200 GPUs. According to Nvidia, Blackwell-based systems can run trillion-parameter large language models (LLMs) at up to 25 times lower cost and energy consumption than the previous generation. Nvidia attributes this to a 208-billion-transistor chip built on TSMC's custom 4NP process, a dual-die design whose two dies are joined by a 10 TB/s NV-HBI chip-to-chip link, and fifth-generation NVLink, which provides 1.8 TB/s of bandwidth per GPU for rack-scale connectivity.

Huang presented Nvidia's own performance figures, which show Blackwell clusters training a GPT-MoE-1.8T model 4x faster than comparable H100 setups. For inference, the claimed gains are larger still: up to 30x for real-time serving of the same trillion-parameter model. The GB200 Grace Blackwell Superchip combines two B200 GPUs with a Grace CPU and scales up to the GB200 NVL72 rack system: 72 GPUs delivering 1.4 exaFLOPS of FP4 AI performance in a liquid-cooled design. With most customers expected to reach the hardware through cloud providers, Nvidia is positioning it for hyperscalers such as Microsoft Azure and Google Cloud, which announced early commitments.
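As a rough sanity check on the rack-level figure, the quoted numbers can be divided back down to a single GPU. The short Python sketch below uses only the figures cited above (72 GPUs, 1.4 exaFLOPS, two GPUs per superchip). The function names are illustrative, and the result is a simple average of Nvidia's headline number, not a measured benchmark.

```python
# Figures quoted in the article for the GB200 NVL72 rack.
GPUS_PER_RACK = 72
RACK_AI_EXAFLOPS = 1.4   # Nvidia's headline AI-performance figure
GPUS_PER_SUPERCHIP = 2   # one GB200 Superchip = two B200 GPUs + one Grace CPU


def per_gpu_petaflops(rack_exaflops: float, gpus: int) -> float:
    """Average AI throughput per GPU in petaFLOPS (1 exaFLOPS = 1,000 petaFLOPS)."""
    return rack_exaflops * 1_000 / gpus


def superchips_per_rack(gpus: int, gpus_per_superchip: int) -> int:
    """Number of Grace Blackwell Superchips needed to supply the rack's GPUs."""
    return gpus // gpus_per_superchip


if __name__ == "__main__":
    pflops = per_gpu_petaflops(RACK_AI_EXAFLOPS, GPUS_PER_RACK)
    chips = superchips_per_rack(GPUS_PER_RACK, GPUS_PER_SUPERCHIP)
    print(f"{pflops:.1f} petaFLOPS per GPU across {chips} superchips")
```

On these inputs the rack averages about 19.4 petaFLOPS per GPU, spread across 36 superchips.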

Ecosystem and Partnerships Fuel Adoption

GTC 2024 highlighted Nvidia's sprawling ecosystem. Over 25,000 attendees and 1,000 sessions underscored developer momentum. Major announcements included:

- Microsoft: Azure adopting GB200 NVL72 for Copilot training.

- Google Cloud: TPUs integrating with Blackwell for hybrid workflows.

- AWS: EC2 P5 instances with Blackwell preview.

- Oracle and Dell: Sovereign AI clouds powered by GB200.

Startups like Inflection AI and Together AI showcased Blackwell-optimized models. Huang also teased Project DIGITS, a $3,000 DGX for researchers, democratizing access. Software updates like CUDA 12.3, NeMo microservices, and NIM inference microservices (now GA) lower barriers for enterprises building custom AI.

Business Implications: AI CapEx Boom

From a business perspective, GTC signals escalating spending on AI infrastructure. A large share of hyperscalers' 2024 CapEx forecasts (Microsoft's $56B, Google's $12B quarterly) flows to Nvidia. Post-keynote, NVDA stock rose 8% in after-hours trading on March 18, pushing its market cap past $2.2 trillion by March 24. Analysts at firms such as Goldman Sachs raised price targets to $1,000 per share, citing Blackwell's moat against AMD's MI300X and against custom silicon from the hyperscalers themselves.

Cybersecurity ties in too: Nvidia's DGX H100 supports confidential computing to protect sensitive AI workloads, which matters amid rising breaches such as the Change Healthcare attack in February. For startups, expansions to the Nvidia Inception program offer credits that can accelerate AI ventures.

However, challenges loom. Supply chain bottlenecks persist: Blackwell production is set to ramp in Q2 2024, with full availability in the second half of the year. Energy demands are staggering, with a DGX GB200 rack drawing about 120 kW, which strains existing data centers. Huang addressed this with a preview of the Rubin architecture, slated for 2026, which promises further efficiency gains.
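To put the 120 kW figure in context, a back-of-the-envelope calculation helps. The sketch below assumes the rack runs at a constant load all year and ignores cooling and other facility overhead. The `utilization` parameter and the function names are assumptions for illustration, not Nvidia figures.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_energy_mwh(rack_kw: float, utilization: float = 1.0) -> float:
    """Energy one rack draws over a year, in megawatt-hours.

    utilization is the assumed average fraction of peak power (0 to 1).
    """
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    return rack_kw * utilization * HOURS_PER_YEAR / 1_000


def racks_per_megawatt(rack_kw: float) -> int:
    """Whole racks that fit within 1 MW (1,000 kW) of IT power."""
    return int(1_000 // rack_kw)


if __name__ == "__main__":
    rack_kw = 120  # per-rack draw quoted in the article
    print(f"{annual_energy_mwh(rack_kw):,.1f} MWh per rack per year")
    print(f"{racks_per_megawatt(rack_kw)} racks per MW of IT power")
```

At full load, one rack consumes about 1,051 MWh a year, and only eight such racks fit within a megawatt of IT power.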

Market Reaction and Competitive Landscape

Nvidia's fiscal Q4 earnings, reported on February 21, had already shown 265% year-over-year revenue growth to $22.1B, with Data Center revenue at $18.4B. GTC reinforced the company's guidance of about $24B in Q1 revenue. Competitors reacted: AMD's GTC booth emphasized MI300X software parity, while Intel touted Gaudi3. Yet Nvidia's CUDA lock-in, with over 4 million developers, remains the killer advantage.
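The growth figures imply a baseline that is worth making explicit. The sketch below backs out the year-ago quarter from the reported 265% growth, assuming that figure is year over year, and computes Data Center's share of total revenue. The helper names are illustrative, and the outputs are derived from the rounded figures above, so they are approximate.

```python
def prior_period_revenue(current: float, growth_pct: float) -> float:
    """Revenue in the comparison period, given current revenue and % growth.

    265% growth means current = prior * (1 + 2.65).
    """
    return current / (1 + growth_pct / 100)


def share_pct(part: float, total: float) -> float:
    """Part as a percentage of total."""
    return 100 * part / total


if __name__ == "__main__":
    q4_revenue_b = 22.1    # total revenue, $B (from the article)
    data_center_b = 18.4   # Data Center revenue, $B (from the article)
    growth_pct = 265       # reported revenue growth, %

    prior = prior_period_revenue(q4_revenue_b, growth_pct)
    share = share_pct(data_center_b, q4_revenue_b)
    print(f"Implied year-ago quarter: ${prior:.2f}B")
    print(f"Data Center share of revenue: {share:.1f}%")
```

These inputs imply year-ago quarterly revenue of roughly $6.1B and a Data Center share of about 83%.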

Enterprise adoption is accelerating. Huang noted 100+ DGX SuperPOD orders, each a $200M+ deal. Financial services firms like Morgan Stanley use Nvidia for risk modeling; healthcare players leverage BioNeMo for drug discovery.

Looking Ahead: The Rubin Era and Beyond

Huang's roadmap extends to Rubin GPUs in 2026, with an annual release cadence that signals relentless innovation. That cadence pressures rivals and cements Nvidia as the 'AI foundry.' For investors, it's a bet on unending AI demand; for CEOs, it's a call to invest in sovereign AI stacks amid geopolitical tensions.

GTC 2024 wasn't hype—it was a blueprint for AI's industrial revolution. As enterprises grapple with ROI on gen AI, Blackwell lowers the bar, potentially unlocking $1T+ in productivity gains. Nvidia's empire-building continues, but execution on supply and energy will define 2024's narrative.
