On May 13, 2024, OpenAI dropped a bombshell in the AI world with the launch of GPT-4o, its new flagship model. The 'o' stands for 'omni,' and the release represents a leap forward in multimodal AI: a single model that takes any combination of text, audio, and images as input, responds in text or speech, and can pick up on and convey emotion in its voice. Priced at half the cost of GPT-4 Turbo in the API while matching its performance on English text and code, and improving on non-English languages, vision, and audio, GPT-4o is poised to reshape how developers, businesses, and consumers interact with artificial intelligence.

The 'Omni' Effect: What Makes GPT-4o Special?

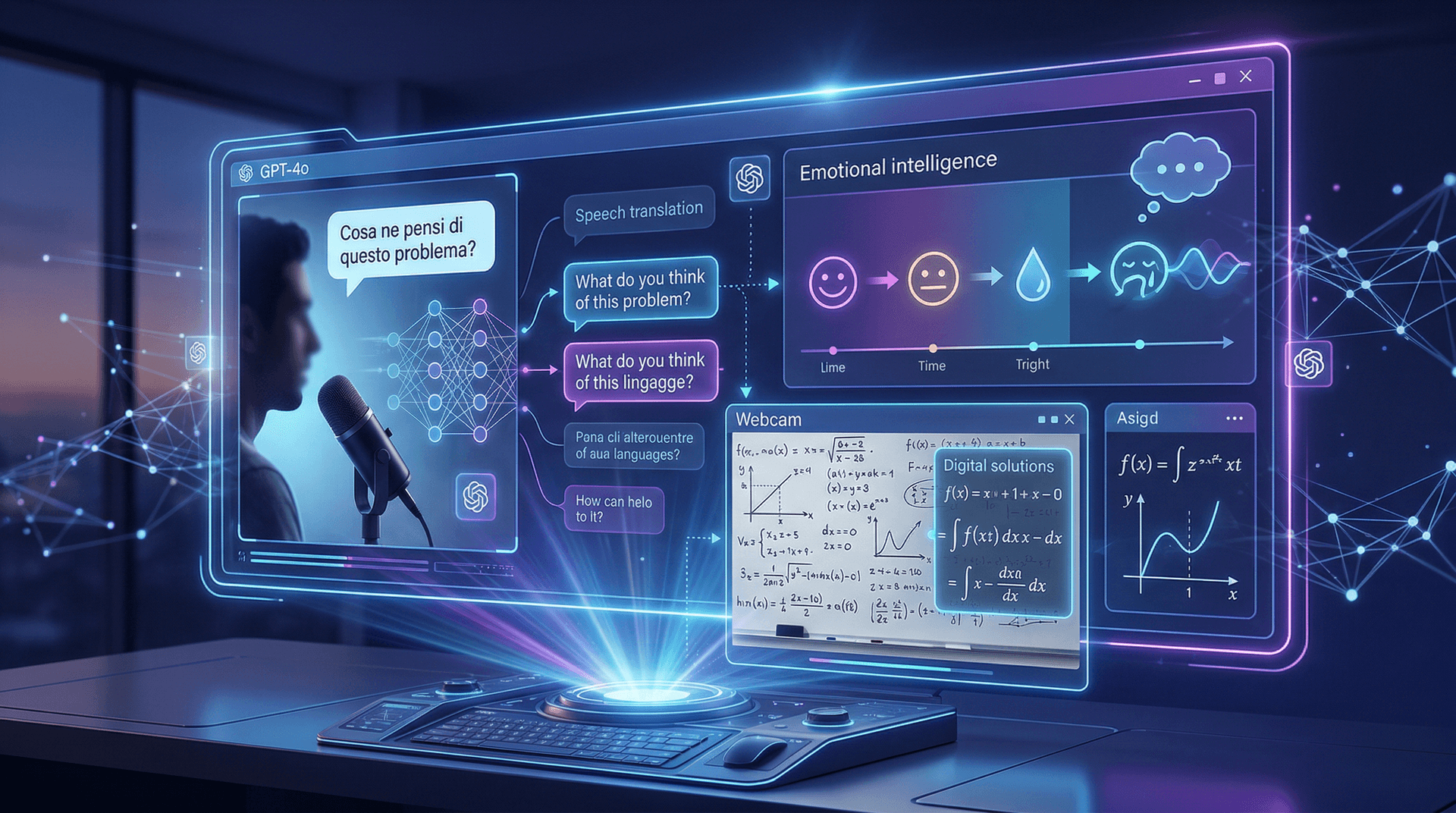

GPT-4o stands for Generative Pre-trained Transformer 4 Omni, emphasizing that it was trained end-to-end as one model across multiple modalities. Earlier ChatGPT Voice Mode chained three separate models: one transcribed speech to text, GPT-3.5 or GPT-4 generated a reply, and a third converted that reply back to speech. GPT-4o instead processes text, audio, and vision in a single neural network. The result is dramatically lower latency: it can respond to voice input in as little as 232 milliseconds, with an average of 320 milliseconds, comparable to human response times in conversation.

During the livestream from OpenAI's San Francisco offices, CTO Mira Murati and members of the research team showcased jaw-dropping feats. The model translated a conversation between Italian and English in real time, relaying each speaker's words aloud. Pointed through a phone camera at a linear equation written on paper, it walked a presenter through solving it step by step, explaining aloud with natural inflection. GPT-4o even picked up on emotional cues, noticing a presenter's rapid, nervous breathing and coaching him to slow down, a glimpse of what OpenAI framed as emotional awareness.

Benchmarks underscore its prowess. According to OpenAI, GPT-4o scores 88.7% on MMLU (general knowledge), 53.6% on GPQA (graduate-level science), 76.6% on MATH, and 90.2% on HumanEval (coding). On the MMMU vision benchmark it reaches 69.1%. A new tokenizer also cuts the number of tokens needed for many non-English languages, and ChatGPT now supports more than 50 languages.

Pricing and Accessibility: Democratizing Advanced AI

One of the most exciting aspects is accessibility. In the API, GPT-4o costs $5 per million input tokens and $15 per million output tokens, half of GPT-4 Turbo's rates, with rate limits five times higher. In ChatGPT, GPT-4o's text and image capabilities are available to Free users (with usage limits) starting immediately, while Plus users get up to 5x higher message limits.
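To make the "half the cost" claim concrete, here is a minimal cost sketch. It assumes GPT-4o's launch list prices of $5 and $15 per million input and output tokens, and GPT-4 Turbo's $10 and $30; the workload (10,000 requests of 1,500 input and 500 output tokens each) is purely hypothetical, and real bills depend on current pricing and actual token counts.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Per-million-token list prices in USD (assumed launch values)."""
    input_per_m: float
    output_per_m: float


# Assumed text/vision list prices at GPT-4o's May 2024 launch.
GPT_4O = Pricing(input_per_m=5.00, output_per_m=15.00)
GPT_4_TURBO = Pricing(input_per_m=10.00, output_per_m=30.00)


def request_cost(p: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one request under per-million-token pricing."""
    return (input_tokens * p.input_per_m + output_tokens * p.output_per_m) / 1_000_000


# Hypothetical workload: 10,000 requests, each 1,500 input + 500 output tokens.
requests = 10_000
cost_4o = requests * request_cost(GPT_4O, 1_500, 500)
cost_turbo = requests * request_cost(GPT_4_TURBO, 1_500, 500)
print(f"GPT-4o: ${cost_4o:,.2f}  GPT-4 Turbo: ${cost_turbo:,.2f}")
# prints: GPT-4o: $150.00  GPT-4 Turbo: $300.00
```

Because both input and output prices are halved, the ratio stays at 50% regardless of how a workload splits between prompt and completion tokens.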

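"GPT-4o is our best model for chat yet," Altman tweeted post-announcement. A new Voice Mode built on GPT-4o, which replaces the separate transcription and text-to-speech pipeline for snappier, more natural interactions, is slated to reach Plus users in alpha in the coming weeks, alongside a new ChatGPT desktop app for macOS. Developers can access GPT-4o's text and vision capabilities in the API today, while audio and video support will go first to a small group of trusted partners.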
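For developers wondering what "access via API" looks like, the sketch below builds a text-plus-image request body. It assumes the Chat Completions JSON shape (model name `"gpt-4o"`, a `content` list mixing `"text"` and `"image_url"` parts); the helper name `build_vision_request` and the image URL are hypothetical placeholders. It only constructs and prints the JSON; actually sending it means POSTing to `https://api.openai.com/v1/chat/completions` with an `Authorization: Bearer <API key>` header, or using OpenAI's official SDK.

```python
import json


def build_vision_request(prompt: str, image_url: str, max_tokens: int = 300) -> dict:
    """Build a Chat Completions request body that mixes text and one image.

    Hypothetical helper for illustration; it does not contact the API.
    """
    return {
        "model": "gpt-4o",
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": prompt},
                    {"type": "image_url", "image_url": {"url": image_url}},
                ],
            }
        ],
        "max_tokens": max_tokens,
    }


# Placeholder prompt and URL, echoing the on-stage math demo.
body = build_vision_request(
    "Walk me through solving the equation in this photo step by step.",
    "https://example.com/whiteboard.jpg",
)
print(json.dumps(body, indent=2))
```

Keeping text and image as separate parts of one message is what lets a single multimodal model reason over both at once, rather than captioning the image in a separate step.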

Under the Hood: Technical Innovations

OpenAI has shared few details about GPT-4o's training data or methods, but the model keeps GPT-4 Turbo's 128K-token context window while running about twice as fast. Because it handles audio natively instead of through a separate transcription step, it can hold low-latency conversations and retain cues such as tone, multiple speakers, and background noise that a plain transcript would lose. Its vision capabilities cover tasks like object recognition, spatial reasoning, and describing real-world scenes.
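A 128K-token context window has to hold both the prompt and the model's reply. The sketch below checks whether a document fits, under two loudly stated assumptions: it uses the rough rule of thumb of about 4 characters per token for English text (not GPT-4o's real tokenizer, so actual counts will differ), and it reserves a hypothetical 4,096 tokens for output. The function names are illustrative only.

```python
# Assumed context window for GPT-4o, in tokens (128K).
CONTEXT_WINDOW = 128_000


def approx_tokens(text: str, chars_per_token: int = 4) -> int:
    """Estimate tokens with the rough ~4 characters/token rule of thumb.

    Heuristic only; exact counts require the model's actual tokenizer.
    Ceiling division keeps the estimate on the conservative side.
    """
    return -(-len(text) // chars_per_token)


def fits_in_context(prompt: str, max_output_tokens: int, window: int = CONTEXT_WINDOW) -> bool:
    """Return True if estimated prompt tokens plus reserved output tokens fit the window."""
    return approx_tokens(prompt) + max_output_tokens <= window


# Hypothetical inputs: a ~400,000-character report and a ~520,000-character one.
report = "x" * 400_000
long_report = "x" * 520_000
print(approx_tokens(report), fits_in_context(report, 4_096))            # 100000 True
print(approx_tokens(long_report), fits_in_context(long_report, 4_096))  # 130000 False
```

Reserving output space up front matters because a prompt that fills the whole window leaves no room for the answer.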

Safety remains paramount. Under OpenAI's Preparedness Framework, GPT-4o does not score above Medium risk in any evaluated category (cybersecurity, CBRN, persuasion, and model autonomy), and more than 70 external experts red-teamed it. At launch, audio outputs are limited to a selection of preset voices. OpenAI says audio and video capabilities will roll out iteratively, emphasizing responsible scaling.

Competitive Landscape and Industry Impact

This launch comes amid fierce rivalry. Just a day later, on May 14, Google I/O countered with Gemini 1.5 Pro updates, Veo video generation, and Imagen 3. However, GPT-4o's real-time multimodal edge sets it apart, challenging incumbents like Anthropic's Claude 3 and Meta's Llama 3 (launched April 18).

For startups and enterprises, GPT-4o lowers barriers to AI integration. Imagine voice-enabled customer service bots that empathize, AR apps with instant scene analysis, or coding assistants that 'see' your screen. In education, healthcare, and creative industries, its versatility could accelerate innovation.

Critics note lingering issues: hallucinations still occur; energy demands are high; and highly realistic voice output raises misuse concerns such as impersonation and voice cloning. OpenAI plans to roll out audio and video capabilities in phases, starting with a small group of trusted partners, to test for safety issues.

Future Horizons: What's Next for OpenAI?

Roadmap speculation abounds: richer video capabilities, whispers of GPT-5, and more autonomous, agent-style assistants built on GPT-4o's foundations. With Microsoft as a key backer, deeper integrations into Azure and Office loom large.

As a senior tech journalist, I've covered AI's evolution from GPT-3's hype to today's practical powerhouses. GPT-4o feels like the tipping point where AI becomes truly conversational and perceptive, blurring human-machine lines. Yet, ethical guardrails must evolve in tandem.

In the startup ecosystem, expect a flurry of GPT-4o-powered ventures. Cybersecurity firms could leverage its vision for threat detection; AI tutors for personalized learning. The finance angle? Algorithmic trading bots with real-time market voice analysis.

Conclusion: A New Chapter in AI

OpenAI's GPT-4o isn't just an upgrade; it's a paradigm shift. By making flagship performance affordable and near-instant, it invites a broader audience to harness AI's potential. As we hit May 15, 2024, the ripples are just beginning. Developers, fire up those APIs; the future is speaking.
