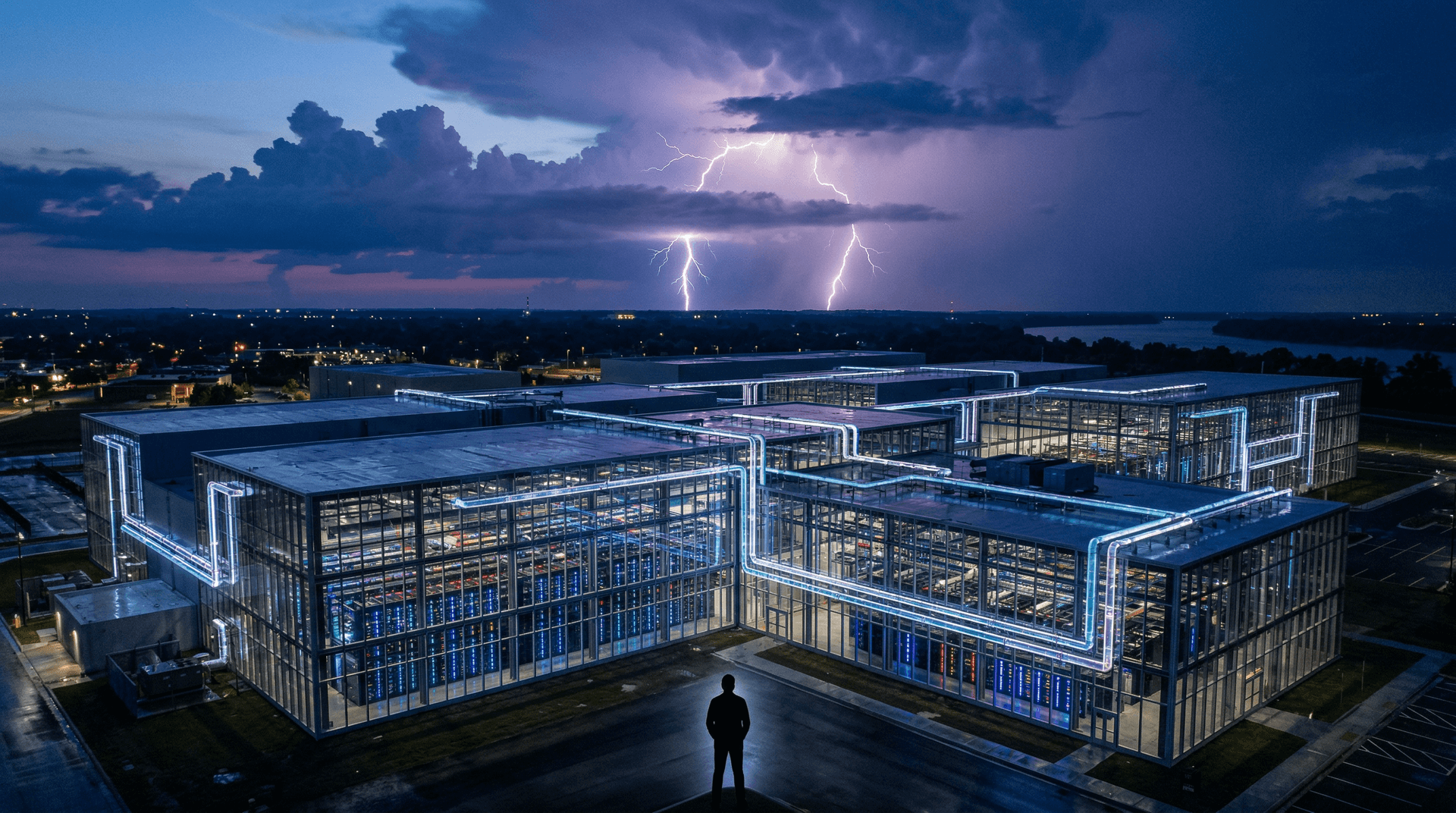

In a bold move that's sending shockwaves through the AI industry, Elon Musk's xAI has unveiled Colossus, the world's largest supercomputer cluster dedicated to artificial intelligence training. Announced on December 5, 2024, this behemoth packs 100,000 Nvidia H100 GPUs into a single facility in Memphis, Tennessee. Constructed in an astonishing 122 days, Colossus represents a quantum leap in compute infrastructure, underscoring xAI's aggressive push to dominate the generative AI race.

The Birth of Colossus: Speed and Scale Unmatched

xAI didn't just build a data center; they shattered records doing it. Partnering with Nvidia, Dell, and Supermicro, the team transformed a former Electrolux factory into a cutting-edge AI factory. Liquid-cooled for efficiency, the cluster delivers exaflop-scale performance tailored for training massive language models like the upcoming Grok-3.
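To put "exaflop-scale" in rough numbers, the sketch below multiplies the GPU count by a per-GPU peak. The 989 TFLOPS figure is an assumption based on Nvidia's published dense BF16 tensor rating for the H100 SXM, and the 40% utilization default is a hypothetical placeholder, not a number reported by xAI.

```python
# Back-of-envelope aggregate compute for a GPU cluster.
# Assumptions (not from xAI): per-GPU peak and utilization are illustrative.

H100_DENSE_BF16_TFLOPS = 989  # approximate datasheet peak, dense (no sparsity)


def peak_exaflops(gpu_count: int, tflops_per_gpu: float = H100_DENSE_BF16_TFLOPS) -> float:
    """Theoretical peak in exaFLOPS (1 EFLOPS = 1,000,000 TFLOPS)."""
    return gpu_count * tflops_per_gpu / 1_000_000


def sustained_exaflops(gpu_count: int, utilization: float = 0.4) -> float:
    """Peak scaled by a hypothetical utilization factor between 0 and 1."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    return peak_exaflops(gpu_count) * utilization


if __name__ == "__main__":
    print(f"peak: {peak_exaflops(100_000):.1f} EFLOPS")
    print(f"sustained at 40%: {sustained_exaflops(100_000):.1f} EFLOPS")
```

Under these assumptions, 100,000 GPUs land just under 100 EFLOPS of theoretical peak. Real training throughput depends heavily on utilization, which is why it is kept as an explicit parameter.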

"Colossus is already the most powerful AI training system in the world," Musk tweeted, highlighting its immediate deployment for Grok-2 refinements and Grok-3 pre-training. By comparison, Meta's largest cluster tops out at around 24,000 H100s, while OpenAI relies on Microsoft Azure with fewer GPUs per unified system. xAI's feat positions it ahead in raw compute firepower.

| Cluster | GPUs | Build Time | Location |
|---|---|---|---|
| xAI Colossus | 100,000 H100 | 122 days | Memphis, TN |
| Meta Llama | ~24,000 H100 | N/A | Multiple |
| Tesla Dojo | ~10,000 equiv. | Ongoing | Austin, TX |
| OpenAI (est.) | 100,000+ distributed | N/A | Azure DCs |

The table shows Colossus's lead in GPUs housed in a single facility. The rapid deployment relied on pre-existing manufacturing capacity and Nvidia's high-volume GPU supply, which Musk credits for enabling the pace.

xAI's Mission: Truth-Seeking AI at Hyperscale

Founded in July 2023, xAI aims to "understand the true nature of the universe" through maximally truth-seeking AI. Unlike competitors focused on safety guardrails or commercial chatbots, xAI prioritizes uncensored, reasoning-heavy models. Grok-1 (open-sourced March 2024) was followed by Grok-2 (August 2024), which showcased multimodal capabilities and image generation via Flux.1 integration.

Colossus fuels Grok-3, expected by end-2024 or early 2025, trained on diverse datasets including real-time X (formerly Twitter) data. Musk claims it will surpass GPT-4o and Claude 3.5 Sonnet in benchmarks like math, coding, and vision. Early tests on Colossus have already boosted Grok-2's performance, hinting at Grok-3's potential as a frontier model.

The AI Arms Race Heats Up

This launch comes amid escalating competition. OpenAI's o1 reasoning models (September 2024) set new bars, but compute bottlenecks hinder iteration. Anthropic's Claude expansions and Google's Gemini 2.0 Flash are driven by the same hunger for compute. Startups like Mistral and Cohere scramble for capacity via cloud deals, but xAI's owned infrastructure gives it an edge.

xAI raised $6 billion in May 2024 at a $24B valuation, fueling Colossus. Plans call for expanding to 300,000 GPUs soon, incorporating Nvidia's Blackwell B200s for even greater efficiency. Memphis was chosen for cheap power (hydro from TVA), fiber connectivity, and local incentives, creating 300+ jobs.

Critics note environmental concerns: 100,000 H100s could consume 150-200 MW, rivaling small cities. xAI counters with liquid cooling (claimed to be 30% more efficient than air cooling) and renewable commitments. Nvidia CEO Jensen Huang praised the project, signaling deeper ties.
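The power range above can be sanity-checked with simple arithmetic. The sketch below assumes Nvidia's published 700 W maximum TDP for the H100 SXM; the server overhead factor and the PUE (power usage effectiveness) value are hypothetical placeholders, since xAI has not published either.

```python
# Rough facility power estimate for a GPU cluster.
# Assumptions: 700 W is the H100 SXM datasheet TDP; the overhead factor and
# PUE defaults are illustrative guesses, not figures from xAI.

H100_SXM_TDP_WATTS = 700


def gpu_power_mw(gpu_count: int, gpu_watts: float = H100_SXM_TDP_WATTS) -> float:
    """Power drawn by the GPUs alone at full TDP, in megawatts."""
    return gpu_count * gpu_watts / 1_000_000


def facility_power_mw(gpu_count: int, server_overhead: float = 1.5, pue: float = 1.3) -> float:
    """GPU power scaled up for hosts and networking, then for cooling.

    server_overhead: multiplier for CPUs, memory, NICs, and switches.
    pue: total facility power divided by IT equipment power.
    """
    it_power = gpu_power_mw(gpu_count) * server_overhead
    return it_power * pue


if __name__ == "__main__":
    print(f"GPUs only: {gpu_power_mw(100_000):.0f} MW")
    print(f"Facility estimate: {facility_power_mw(100_000):.1f} MW")
```

The GPUs alone account for about 70 MW at full TDP (100,000 × 700 W). Where the total lands relative to the cited range depends on the overhead and cooling multipliers, so both are worth varying.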

Technical Deep Dive: Powering Next-Gen ML

Colossus is optimized for transformer training at scale. H100s, with 80GB of HBM3 memory and FP8 precision support, are well suited to mixture-of-experts (MoE) architectures like Grok's. The cluster uses Nvidia's NVLink for low-latency GPU-to-GPU links, which the design relies on to train trillion-parameter models without communication becoming the bottleneck.
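To see why trillion-parameter training needs many GPUs working together, a rough memory count helps. The sketch below assumes 80 GB per H100 (decimal gigabytes) and uses a commonly cited rule of thumb of about 16 bytes per parameter for mixed-precision Adam training (weights, gradients, and optimizer state). It ignores activations and parallelism overhead, and the function name is hypothetical.

```python
# Minimum GPU count needed just to hold model state in memory.
# Assumptions: 80 GB per GPU; bytes-per-parameter values are rules of thumb.

H100_MEMORY_BYTES = 80 * 10**9  # 80 GB per H100, decimal gigabytes


def min_gpus_for_state(param_count: int, bytes_per_param: int,
                       gpu_memory_bytes: int = H100_MEMORY_BYTES) -> int:
    """Smallest number of GPUs whose combined memory fits the model state."""
    total_bytes = param_count * bytes_per_param
    return -(-total_bytes // gpu_memory_bytes)  # integer ceiling division


if __name__ == "__main__":
    trillion = 10**12
    # FP8 weights only: 1 byte per parameter.
    print("weights only (FP8):", min_gpus_for_state(trillion, 1))
    # Mixed-precision Adam training state: roughly 16 bytes per parameter.
    print("training state:", min_gpus_for_state(trillion, 16))
```

Even the weights of a trillion-parameter model span more than a dozen GPUs, and full training state spans hundreds, so every training step involves cross-GPU traffic. That is why interconnect bandwidth matters as much as raw GPU count.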

Key innovations:

- Full liquid cooling: Reduces energy use by a claimed 40% vs. air cooling.

- 122-day ramp-up: Parallel assembly of racks.

- Real-time data ingestion: X's firehose for dynamic training.

For machine learning practitioners, this makes frontier AI more accessible indirectly, through xAI's API and open-sourcing ethos. Grok-2's availability on X Premium accelerates adoption.

Implications for Startups and the Ecosystem

As a startup valued at $24B, xAI exemplifies hyperscaling. Founders must now ponder: build on custom silicon (as Cerebras and Groq do) or aggregate Nvidia GPUs? Colossus validates the latter for speed-to-market.

Cybersecurity angles emerge too: such clusters are prime targets. xAI invests in custom security fabrics to protect IP during training.

Broader ripple effects:

- Chip demand: Nvidia's market cap surges past $3.4T.

- Talent wars: xAI poaches from FAANG.

- Geopolitics: US leads China in GPU scale.

Looking Ahead: Grok-3 and Beyond

With Colossus online, xAI is targeting an imminent Grok-3 release, promising agentic capabilities and video understanding. Musk hints at multimodal supremacy, challenging Sora and Veo.

This isn't just hardware; it's a statement. In the AI gold rush, compute is king, and xAI just crowned itself. As 2024 closes, Colossus cements xAI as a top contender, pushing humanity toward AGI faster than ever.

TH Journal will monitor Grok-3 benchmarks and expansions closely.